The Programmable Enterprise

Enabling organizations for future innovation and agility through APIs

Salesforce continues to grow at an astonishing rate of 30% year over year. With that growth comes an inevitable expansion of data silos across the enterprise—further compounded by acquisitions and the integration of new business units into the broader Salesforce ecosystem. To support a $20B company and beyond, our data integration infrastructure must evolve. Modernization is no longer optional; it is a strategic imperative to scale the business, enable innovation, and control operating costs.

My team’s vision is clear: connect the business to trusted data—securely, reliably, and in real time—so that teams can access what they need, when and where they need it. This is foundational to becoming a truly data-driven enterprise. This document outlines how we achieve that vision through a modern, scalable integration architecture.

Target End State

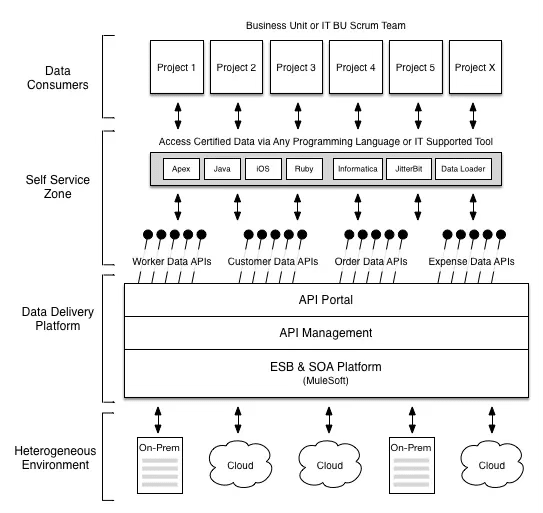

The target end state represents a modernized data integration platform designed to deliver Data-as-a-Service (DaaS) at enterprise scale. At its core, data integration is about the efficient and reliable movement of data between systems. However, to truly enable business agility, this capability must evolve into a platform that delivers data as a consumable service.

The broader industry direction reinforces this approach: real-time data access, REST-based APIs, and self-service consumption models are rapidly becoming the standard. By aligning to these principles, we position the organization to leverage existing standards, adopt emerging ones such as OData, and unlock new opportunities through faster, more intelligent use of data.

The reference architecture illustrates the end-to-end lifecycle of data—from publisher to consumer—providing a holistic view of how data flows across the enterprise. Understanding this lifecycle is critical to designing a platform that is both scalable and resilient.

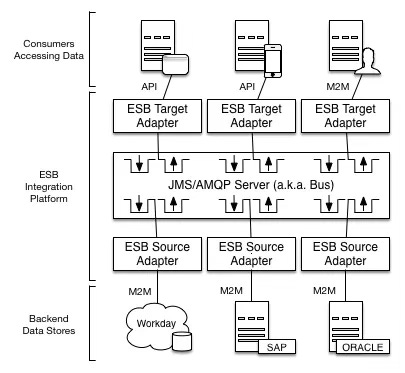

ESB & SOA

At the foundation of the platform is the ESB and SOA layer, which serves as the operational backbone of the integration architecture. This layer addresses many of the limitations inherent in point-to-point models by centralizing connectivity, enabling real-time data routing, supporting data aggregation, and decoupling systems.

From a leadership perspective, this is where standardization and reuse begin to take hold. Rather than building bespoke integrations for each use case, this layer establishes a common framework for how systems communicate—reducing complexity and enabling scale.

Adapters

Within this architecture, ESB adapters play a critical role. Each adapter has a single responsibility: it interfaces with either a source system or a target system, and it encapsulates the logic required for that interaction.

For example, retrieving employee data from Workday would involve a source adapter responsible for querying the system, transforming the data into a Canonical Data Model (CDM), and publishing it to the Message Exchange. Target adapters then independently consume that data and deliver it to downstream systems.

This modular approach localizes change, reduces duplication, and significantly improves maintainability across the integration landscape.
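As a rough illustration of the adapter pattern described above, the following Python sketch wires one source adapter and one target adapter through an in-memory stand-in for the Message Exchange. Everything here is an assumption made for illustration: the class names, the `CanonicalWorker` fields, and the raw field names such as `Employee_ID` are hypothetical and do not reflect Workday's actual API or the real CDM.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CanonicalWorker:
    """Hypothetical CDM record; the fields are illustrative only."""
    worker_id: str
    full_name: str
    email: str


class InMemoryExchange:
    """Minimal stand-in for the Message Exchange (no queuing or retries)."""

    def __init__(self):
        self._handlers = []

    def subscribe(self, handler):
        self._handlers.append(handler)

    def publish(self, message):
        for handler in self._handlers:
            handler(message)


class WorkdaySourceAdapter:
    """Single responsibility: query the source, map to the CDM, publish."""

    def __init__(self, exchange, fetch_records):
        self._exchange = exchange
        # fetch_records is a placeholder for a real Workday query.
        self._fetch_records = fetch_records

    def run(self):
        published = 0
        for raw in self._fetch_records():
            self._exchange.publish(self._to_canonical(raw))
            published += 1
        return published

    @staticmethod
    def _to_canonical(raw):
        # Assumed raw field names; a real adapter would follow the source schema.
        return CanonicalWorker(
            worker_id=raw["Employee_ID"],
            full_name=f'{raw["First_Name"]} {raw["Last_Name"]}',
            email=raw["Work_Email"].lower(),
        )


class DirectoryTargetAdapter:
    """Single responsibility: consume canonical records and deliver them."""

    def __init__(self):
        self.delivered = {}  # stands in for the downstream system

    def handle(self, worker):
        self.delivered[worker.worker_id] = worker


def demo_adapters():
    exchange = InMemoryExchange()
    target = DirectoryTargetAdapter()
    exchange.subscribe(target.handle)
    sample = [{
        "Employee_ID": "E100",
        "First_Name": "Ada",
        "Last_Name": "Lovelace",
        "Work_Email": "Ada.Lovelace@Example.com",
    }]
    WorkdaySourceAdapter(exchange, lambda: sample).run()
    return target.delivered
```

Note that the source adapter knows nothing about the target, and the target adapter sees only canonical records, so either side can be replaced without touching the other.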

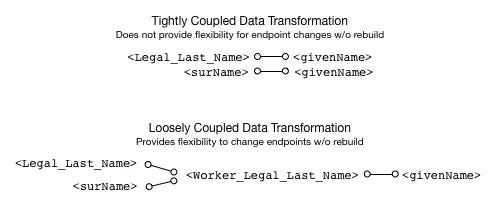

Canonical Data Model

The Canonical Data Model (CDM) is a cornerstone of the architecture. Its primary purpose is to decouple source and target systems by introducing a standardized data representation across the enterprise.

Without a CDM, integrations become tightly coupled, requiring direct mappings between systems. This creates fragility and high cost when systems change. For example, a simple attribute change in a source system can cascade into widespread integration rework.

By contrast, a CDM introduces an intermediary layer. Source systems map to the canonical model, and target systems consume from it. This isolates change and dramatically reduces the impact of system migrations or upgrades. It is a foundational capability for achieving true enterprise agility.
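The decoupling argument can be made concrete with a small sketch. With direct mappings, every source must be mapped to every target it feeds; with a canonical model, each system is mapped once. The Python below is only an illustration under assumed names: the mapping tables, system names, and field names are hypothetical.

```python
def mappings_point_to_point(sources, targets):
    """Worst case: every source is mapped directly to every target."""
    return sources * targets


def mappings_with_cdm(sources, targets):
    """Each system maps once, to or from the canonical model."""
    return sources + targets


# Hypothetical field mappings; names are illustrative only.
SOURCE_TO_CDM = {
    "workday": {"Employee_ID": "worker_id", "Work_Email": "email"},
}
CDM_TO_TARGET = {
    "directory": {"worker_id": "uid", "email": "mail"},
}


def translate(record, mapping):
    """Rename the fields of a record according to a mapping table."""
    return {mapping[key]: value for key, value in record.items() if key in mapping}


def route(source, target, record):
    """Source format -> canonical format -> target format."""
    canonical = translate(record, SOURCE_TO_CDM[source])
    return translate(canonical, CDM_TO_TARGET[target])
```

In this sketch, if the source renames `Work_Email`, only the `workday` entry in `SOURCE_TO_CDM` changes; no target mapping is touched. For 5 sources and 8 targets, the worst-case direct approach needs 40 mappings, while the canonical approach needs 13.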

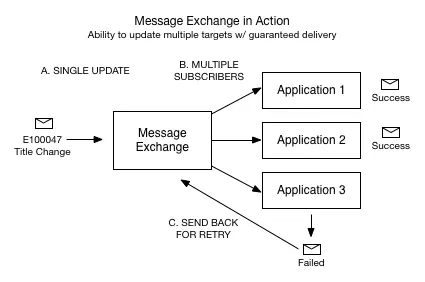

Message Exchange

The Message Exchange (ME) provides real-time data distribution across the platform. It acts as a broker, enabling source systems to publish events and target systems to subscribe to them.

This model introduces two key advantages. First, it supports a publish-and-subscribe pattern, allowing new consumers to be added without modifying existing integrations. Second, it ensures reliable message delivery. If a downstream system is unavailable, messages are queued and retried, or routed for manual intervention if necessary.

This is a significant advancement over point-to-point (P2P) architectures, where a failed delivery often requires reprocessing an entire dataset. The ME enables resilience, scalability, and operational efficiency in how data flows across the enterprise.
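The queue-and-retry behavior described above might look roughly like the following Python sketch. It is an in-memory toy, not a real broker: the `Broker` class, the `max_attempts` setting, and the subscriber names are assumptions, and a production Message Exchange would add persistence, backoff, and ordering guarantees.

```python
from collections import deque


class Broker:
    """Toy publish/subscribe broker with per-subscriber queues and retries."""

    def __init__(self, max_attempts=3):
        self.max_attempts = max_attempts
        self._handlers = {}     # subscriber name -> handler callable
        self._queues = {}       # subscriber name -> deque of (message, attempts)
        self.dead_letters = []  # (subscriber name, message) awaiting manual review

    def subscribe(self, name, handler):
        self._handlers[name] = handler
        self._queues[name] = deque()

    def publish(self, message):
        # Each subscriber gets its own copy of the pending delivery.
        for queue in self._queues.values():
            queue.append((message, 0))

    def deliver_pending(self):
        """One delivery pass: attempt each currently queued message once."""
        for name, queue in self._queues.items():
            handler = self._handlers[name]
            for _ in range(len(queue)):
                message, attempts = queue.popleft()
                try:
                    handler(message)
                except Exception:
                    attempts += 1
                    if attempts >= self.max_attempts:
                        # Retries exhausted: route for manual intervention.
                        self.dead_letters.append((name, message))
                    else:
                        queue.append((message, attempts))


def demo_broker():
    broker = Broker(max_attempts=3)
    received = []
    calls = {"count": 0}

    def flaky(message):
        # Unavailable for the first two attempts, then recovers.
        calls["count"] += 1
        if calls["count"] < 3:
            raise ConnectionError("downstream unavailable")
        received.append(message)

    def always_down(message):
        raise ConnectionError("downstream unavailable")

    broker.subscribe("hr_portal", flaky)
    broker.subscribe("legacy_system", always_down)
    broker.publish({"event": "worker.updated", "worker_id": "E100"})
    for _ in range(3):
        broker.deliver_pending()
    return received, broker.dead_letters
```

In the demo, the subscriber that recovers eventually receives the single message, while the subscriber that never recovers has its copy moved to the dead-letter list after three attempts. The publisher is unaffected in both cases, and no dataset is reprocessed.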

Taken together, these components—adapters, canonical data model, and message exchange—form the foundation of a loosely coupled integration architecture. This is what enables flexibility, reduces cost, and allows the enterprise to evolve without constant rework.

However, while SOA provides a strong foundation, it is not sufficient on its own to meet the demands of modern digital businesses. To truly unlock agility, data must be made directly accessible to those who need it.

APIs & API Management

APIs are the mechanism through which data becomes accessible, consumable, and actionable. They extend the value of the integration platform by placing data directly into the hands of developers, applications, and ultimately, the business.

From a strategic standpoint, APIs enable both operational efficiency and new business models. Internally, they accelerate development and simplify access to complex data. Externally, they create opportunities to monetize data and expand digital ecosystems.

Within the enterprise, APIs typically fall into three categories:

Aggregation APIs: Simplify access by combining data from multiple systems into a single, optimized interface. For example, a Worker API can aggregate data from Workday, Fieldglass, and Supportforce into a unified view.

Internal Application APIs: Expose functionality from internally developed systems, enabling reuse and service-oriented design.

Packaged APIs: Provided by third-party platforms to expose application capabilities.
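To illustrate the aggregation category, here is a minimal Python sketch of a Worker API that merges records from several systems into one view. The fetcher functions are stubs and the field names are hypothetical; the real Workday, Fieldglass, and Supportforce interfaces are not modeled here. The merge rule (first system to supply a field wins) is also just one possible design choice.

```python
def get_worker(worker_id, sources):
    """Build a unified worker view.

    sources maps a system name to a callable that takes a worker ID and
    returns a dict of fields, or None if the system has no such worker.
    """
    view = {"worker_id": worker_id, "sources": []}
    for system, fetch in sources.items():
        record = fetch(worker_id)
        if record is None:
            continue
        view["sources"].append(system)
        for field, value in record.items():
            # Earlier systems take precedence when fields overlap.
            view.setdefault(field, value)
    return view


def demo_worker_api():
    # Stub fetchers standing in for real backend calls.
    sources = {
        "workday": lambda wid: {"name": "Ada Lovelace", "email": "ada@example.com"},
        "fieldglass": lambda wid: None,  # not a contingent worker
        "supportforce": lambda wid: {"email": "ada@support.example.com", "open_cases": 2},
    }
    return get_worker("E100", sources)
```

A consumer calls one function and receives one record; which system supplied which field is an internal detail of the API.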

As the number of APIs grows, API management becomes essential to ensure scalability, security, and governance.

Security

Exposing data through APIs introduces risk, making security a top priority. API management platforms provide robust mechanisms such as OAuth 2.0 for delegated authorization (typically paired with OpenID Connect where user authentication is required), along with protections against threats such as denial-of-service attacks and payload-based vulnerabilities.

Equally important is the ability to quickly revoke access if a compromise occurs—ensuring that risk can be contained in real time.
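The value of fast revocation can be shown with a simplified sketch. The Python below is not an OAuth 2.0 implementation; it is an in-memory token registry, with hypothetical class, client, and scope names, meant only to show scope checks and revocation taking effect on the very next request.

```python
import secrets


class TokenRegistry:
    """Toy access-token store with scope checks and per-client revocation."""

    def __init__(self):
        self._tokens = {}  # token -> (client_id, frozenset of scopes)

    def issue(self, client_id, scopes):
        token = secrets.token_urlsafe(32)
        self._tokens[token] = (client_id, frozenset(scopes))
        return token

    def is_authorized(self, token, required_scope):
        entry = self._tokens.get(token)
        return entry is not None and required_scope in entry[1]

    def revoke_client(self, client_id):
        """Revoke every token issued to a client; returns how many were removed."""
        revoked = [t for t, (cid, _) in self._tokens.items() if cid == client_id]
        for token in revoked:
            del self._tokens[token]
        return len(revoked)
```

Because every request is checked against the registry, a compromised client loses access as soon as `revoke_client` returns, without any change to the backend systems.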

Policies

API policies enable control over how APIs are consumed. Capabilities such as rate limiting, traffic management, and content-based routing ensure consistent performance and protect backend systems from overload.

These controls are essential to maintaining reliability as adoption scales.
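Rate limiting is commonly implemented with a token bucket, sketched below in Python. This is one well-known technique, not necessarily what any particular API management product uses; the class name and parameters are assumptions. The clock is passed in explicitly so the behavior is easy to reason about and test.

```python
class TokenBucket:
    """Token-bucket rate limiter: bursts up to `capacity`, refilled over time."""

    def __init__(self, capacity, refill_per_second, now=0.0):
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self.tokens = float(capacity)  # start with a full bucket
        self.updated_at = now

    def allow(self, now):
        """Return True and consume one token if the request may proceed."""
        elapsed = max(0.0, now - self.updated_at)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_second)
        self.updated_at = max(self.updated_at, now)
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False
```

With a capacity of 2 and a refill rate of 1 token per second, a consumer can send two requests immediately, is rejected on the third, and regains one request per second afterward. In practice a gateway would keep one bucket per consumer or per API key.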

Proxies

API proxies provide a layer of abstraction between consumers and backend systems. This decoupling allows backend services to evolve without impacting API consumers, enabling faster innovation and reducing dependency-related risk.
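A minimal sketch of this abstraction, in Python with hypothetical names, is a routing table that maps a stable public path to whichever backend currently serves it. The path, the backends, and the response shape are all illustrative assumptions.

```python
class ApiProxy:
    """Maps stable public paths to replaceable backend callables."""

    def __init__(self):
        self._routes = {}

    def register(self, public_path, backend):
        # Registering the same path again swaps the backend in place.
        self._routes[public_path] = backend

    def handle(self, public_path, **params):
        backend = self._routes.get(public_path)
        if backend is None:
            return {"status": 404, "body": None}
        return {"status": 200, "body": backend(**params)}


def demo_proxy():
    def legacy_backend(worker_id):
        return {"worker_id": worker_id, "name": "Ada Lovelace"}

    def new_backend(worker_id):
        # Different implementation, same contract toward the consumer.
        return {"worker_id": worker_id, "name": "Ada Lovelace"}

    proxy = ApiProxy()
    proxy.register("/v1/workers", legacy_backend)
    before = proxy.handle("/v1/workers", worker_id="E100")
    proxy.register("/v1/workers", new_backend)  # backend migration
    after = proxy.handle("/v1/workers", worker_id="E100")
    return before, after
```

The consumer's call to `/v1/workers` is identical before and after the backend swap, which is the decoupling the proxy layer is meant to provide.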

Analytics

APIs introduce a new dimension of visibility. With analytics, organizations can monitor usage patterns, understand demand, and enforce service-level agreements. This data-driven insight is critical for managing APIs as products and ensuring they deliver measurable value.
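As a simple illustration of usage and SLA monitoring, the Python sketch below summarizes a call log by API, reporting the call count and the 95th-percentile latency against a latency target. The log format, the API names, and the choice of p95 with a nearest-rank percentile are assumptions for the example, not a description of any specific analytics product.

```python
import math
from collections import defaultdict


def percentile(values, pct):
    """Nearest-rank percentile of a non-empty list of numbers."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def summarize(call_log, sla_ms):
    """call_log is an iterable of (api_name, latency_ms) pairs."""
    latencies = defaultdict(list)
    for api, latency_ms in call_log:
        latencies[api].append(latency_ms)
    summary = {}
    for api, values in latencies.items():
        p95 = percentile(values, 95)
        summary[api] = {"calls": len(values), "p95_ms": p95, "sla_met": p95 <= sla_ms}
    return summary
```

A report like this shows both demand (call counts per API) and whether each API is meeting its latency target, which are the two inputs needed to manage APIs as products.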

Self-Service API Portal

The final step in delivering Data-as-a-Service is enabling self-service access through an API portal. This provides a centralized interface for discovering, accessing, and managing APIs.

Through the portal, developers can explore available APIs, review documentation, request access, and begin integration quickly—without friction or dependency on centralized teams. This model significantly accelerates development cycles and empowers innovation at scale.

Consider a simple example. A developer building a mobile employee directory needs access to worker data. Rather than integrating with multiple systems and managing disparate data models, the developer can discover a unified Worker API through the portal, request access, and begin development immediately. The complexity of aggregation, transformation, and governance is abstracted away—allowing the developer to focus on delivering business value.

This is the essence of Data-as-a-Service: simplifying access, increasing speed, and enabling the organization to fully leverage its data assets.